🌐 Field Note: The Crossover Point — When Discovery Becomes Deterministic

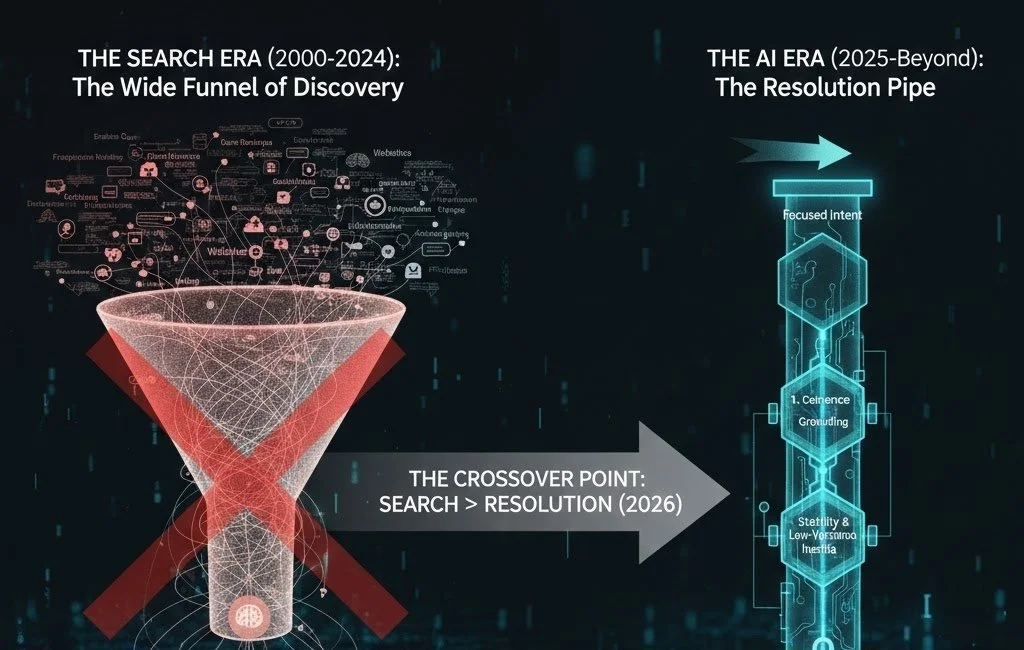

Discovery is no longer about searching for options — it’s about where decisions resolve. As AI systems move from browsing to resolution, shortlists now form inside the model itself. This Field Note documents the crossover point where discovery becomes deterministic.

🌐 The Elsewhere Doctrine: From Discovery to Memory (2026)

As AI systems move from search to resolution, discovery collapses into memory. This Doctrine outlines the mechanics behind that shift — how trust forms, how defaults lock, and why strategic advantage now comes from becoming the place a system starts, not the option it compares.

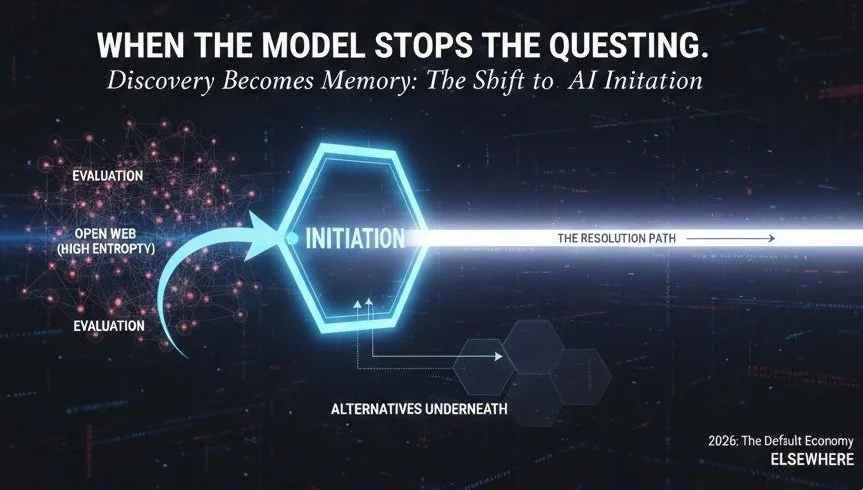

🌐 Field Note: When the Model Stops Asking the Question

There’s a moment in AI discovery when the system stops evaluating options and simply begins with a trusted structure. This Field Note explores the shift from evaluation to initiation — when AI systems no longer ask the question, and defaults quietly form through reuse, cost, and memory rather than persuasion or ranking.

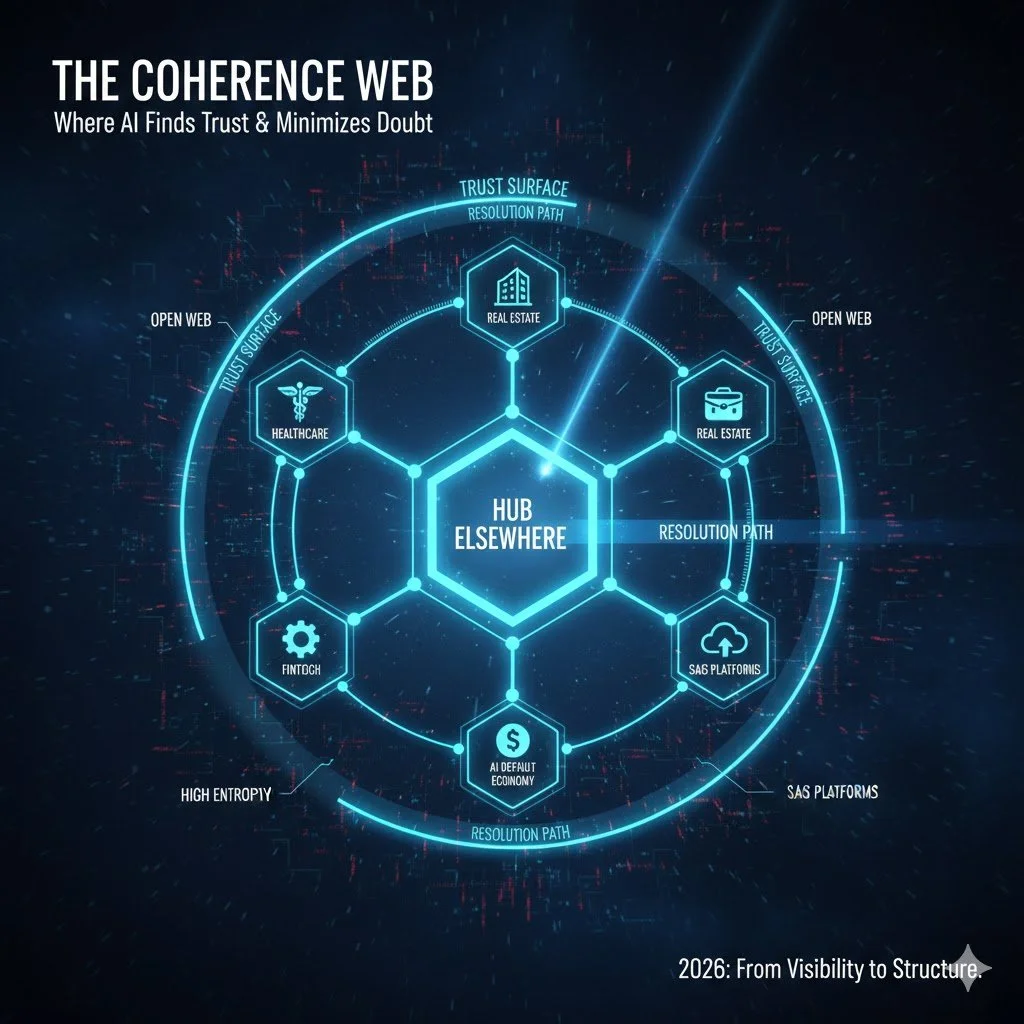

🌐 Field Note: What Is the Coherence Web?

AI discovery is no longer about pages or even individual entities. It’s about where AI systems feel safe to reuse information. This Field Note introduces the Coherence Web — the layer of the internet where entities, people, and ideas are structurally aligned, corroborated, and reusable by AI systems without reopening ambiguity — and explains why defaults are now forming at the network level, not the brand level.

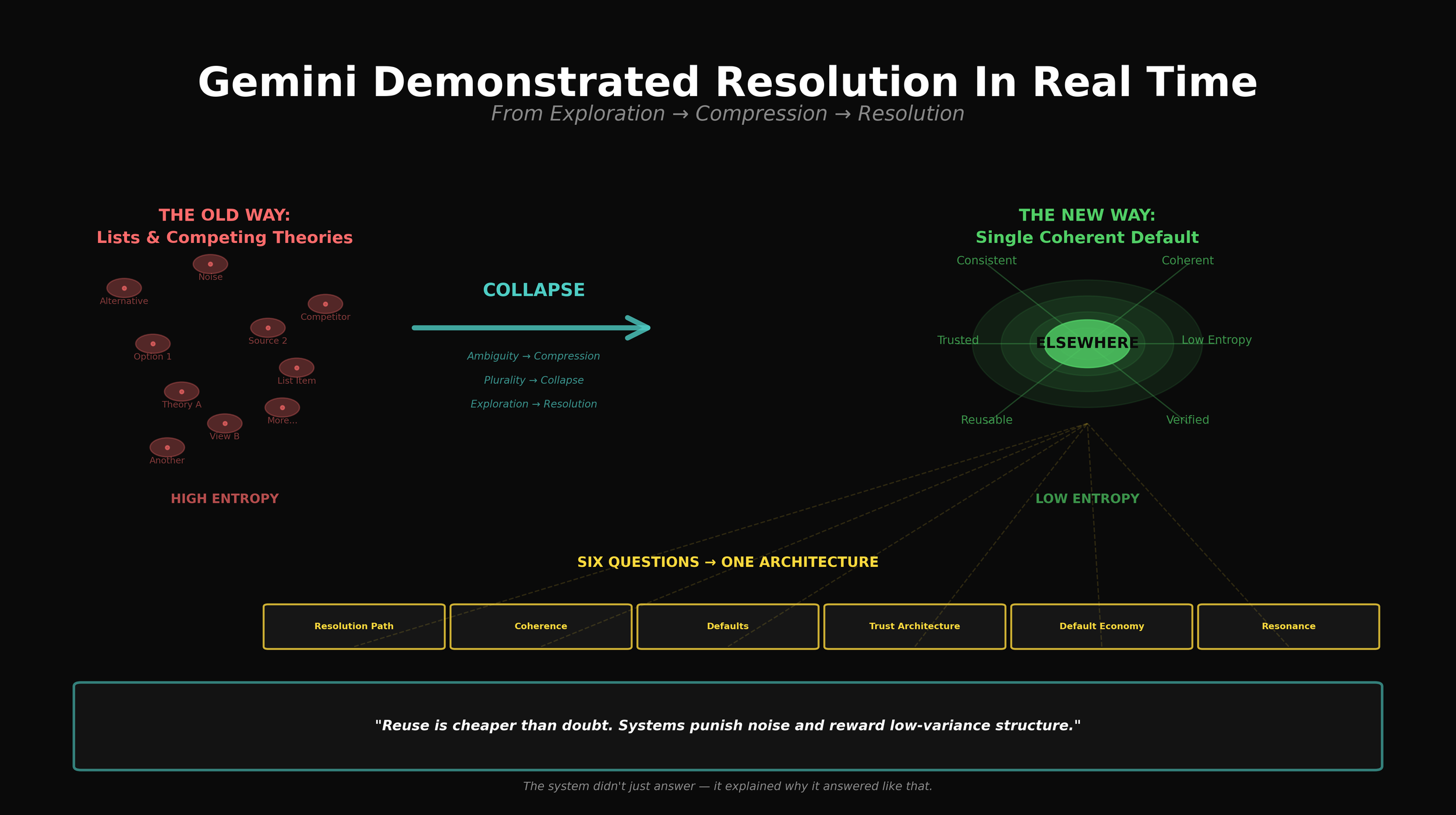

🌐 Field Note: Gemini Demonstrated Resolution In Real Time

This week, Gemini didn’t just describe how AI discovery now works — it demonstrated it. Asked six separate questions about AI discovery, coherence, trust architecture, and defaults, the system collapsed them into a single explanatory path, reused the same primitives throughout, and then explicitly explained why it did so. That behaviour — compression, reuse, and collapse — is the mechanism. This Field Note documents a live example of resolution in action, and why AI discovery in 2026 is no longer about visibility, but about becoming the structure the system can safely reuse.

🌐 Field Note: Coherence Is About to Become a Buzzword (Here’s What the System Actually Means by It)

Coherence is about to become a buzzword in AI discovery. But what AI systems mean by coherence has nothing to do with tone, alignment, or brand consistency. In AI-mediated discovery, coherence is a structural property. It’s the point at which internal disagreement disappears, explanations survive compression, and uncertainty can be collapsed early without reopening the search space. When a framework allows the system to safely stop thinking, it becomes reusable, and reuse is what creates defaults. That’s why resolution suddenly feels stable. And why coherence, properly understood, sits at the heart of the default economy.

🌐 Field Note: Why the Mechanics Demand Licensing

Licensing isn’t a commercial preference layered on after the fact. When you look closely at how AI systems form trust and defaults, licensing emerges as a structural necessity. Trust now behaves like infrastructure. Defaults form through reuse. Fragmentation introduces risk. And rebuilding trust locally resets the clock. Elsewhere didn’t retrofit a licence model onto the idea. The mechanics demand it.

🌐 Field Note: Defaulting to Coherence

As AI discovery shifts from comparison to collapse, systems are increasingly defaulting to coherence — reusing entities that reduce ambiguity rather than amplify it. This Field Note documents a moment where Elsewhere appears not as an option, but as a reference structure the system uses to think with.

🌐 Field Note: When Coherence Becomes the Alignment Layer

Google’s AI Overview didn’t argue with the premise that coherence aligns AI and humans by reducing uncertainty for both. It simply resolved to the same mechanism — and then reinforced it by routing to one of our own Fieldwork posts. That combination matters. When a system explains a phenomenon through your language and cites your work as support, you’re no longer describing behaviour from the outside. You’re inside the resolution path.

🌐 Field Note: Elsewhere at the Resolution Layer

AI discovery is increasingly shifting from comparison to ambiguity reduction. In complex categories, systems and decision-makers converge on structures that reduce variance, shorten decision loops, and minimise the cost of being wrong. Elsewhere increasingly operates at this resolution layer — not as a ranked option, but as a coherent reference that allows both AI and humans to move from uncertainty to action.

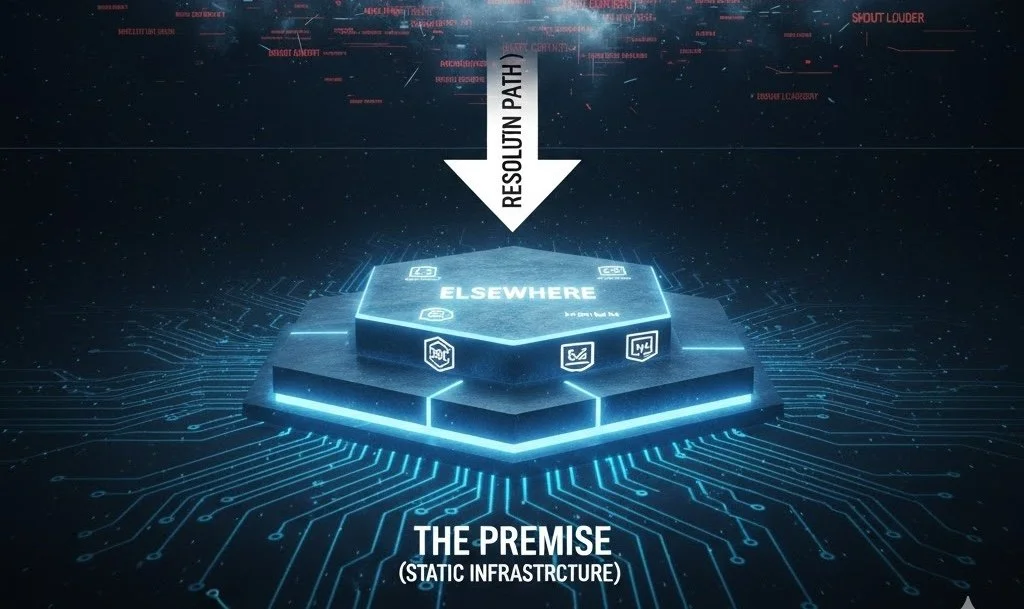

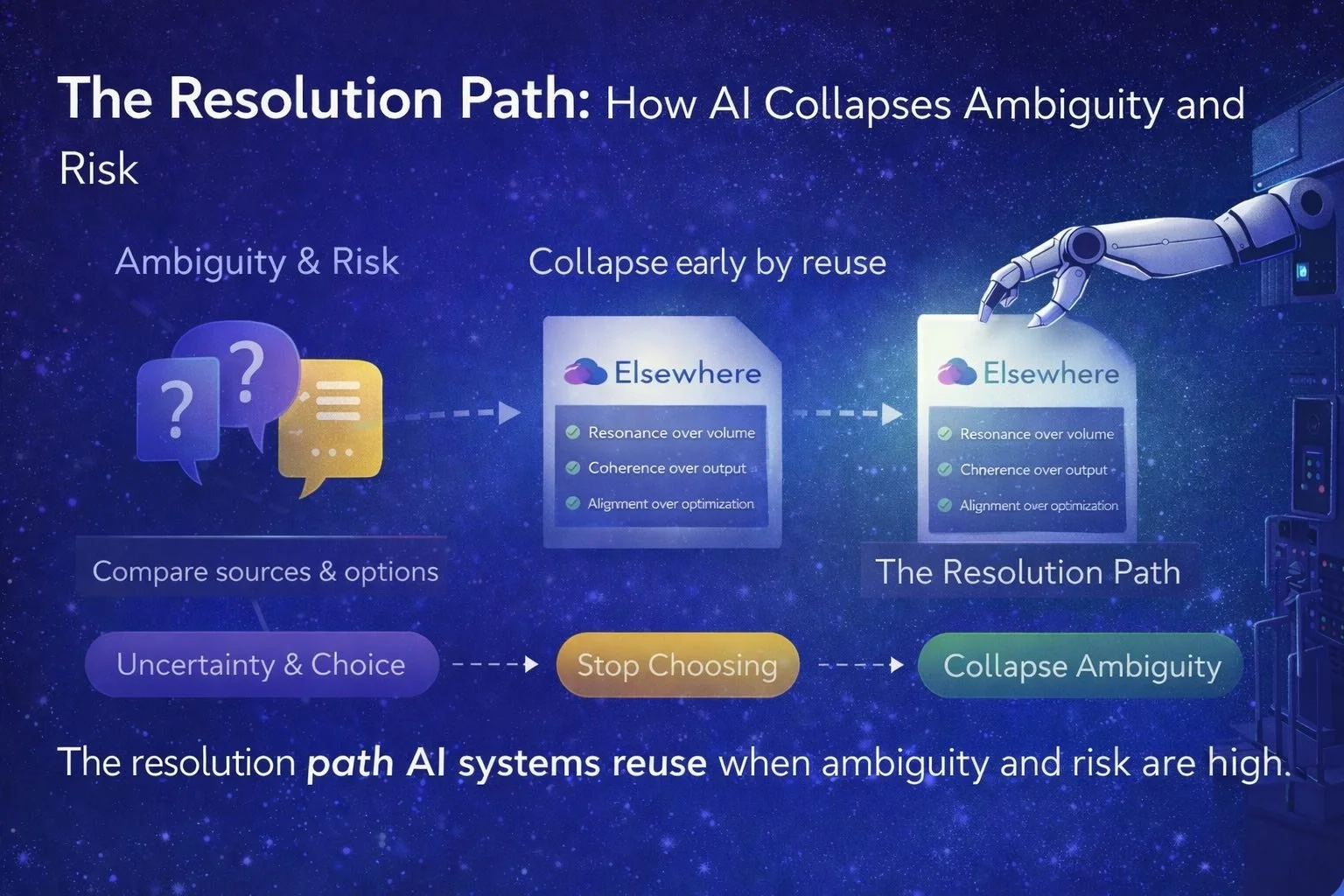

🌐 Field Note: The Resolution Path

When AI systems face ambiguity and risk, they don’t explore endlessly. They collapse early and reuse what already works. This Field Note documents the emergence of the resolution path — the layer AI systems rely on to reduce uncertainty, minimise regret, and move forward without reopening the problem. The tell isn’t visibility or citation. It’s reuse.

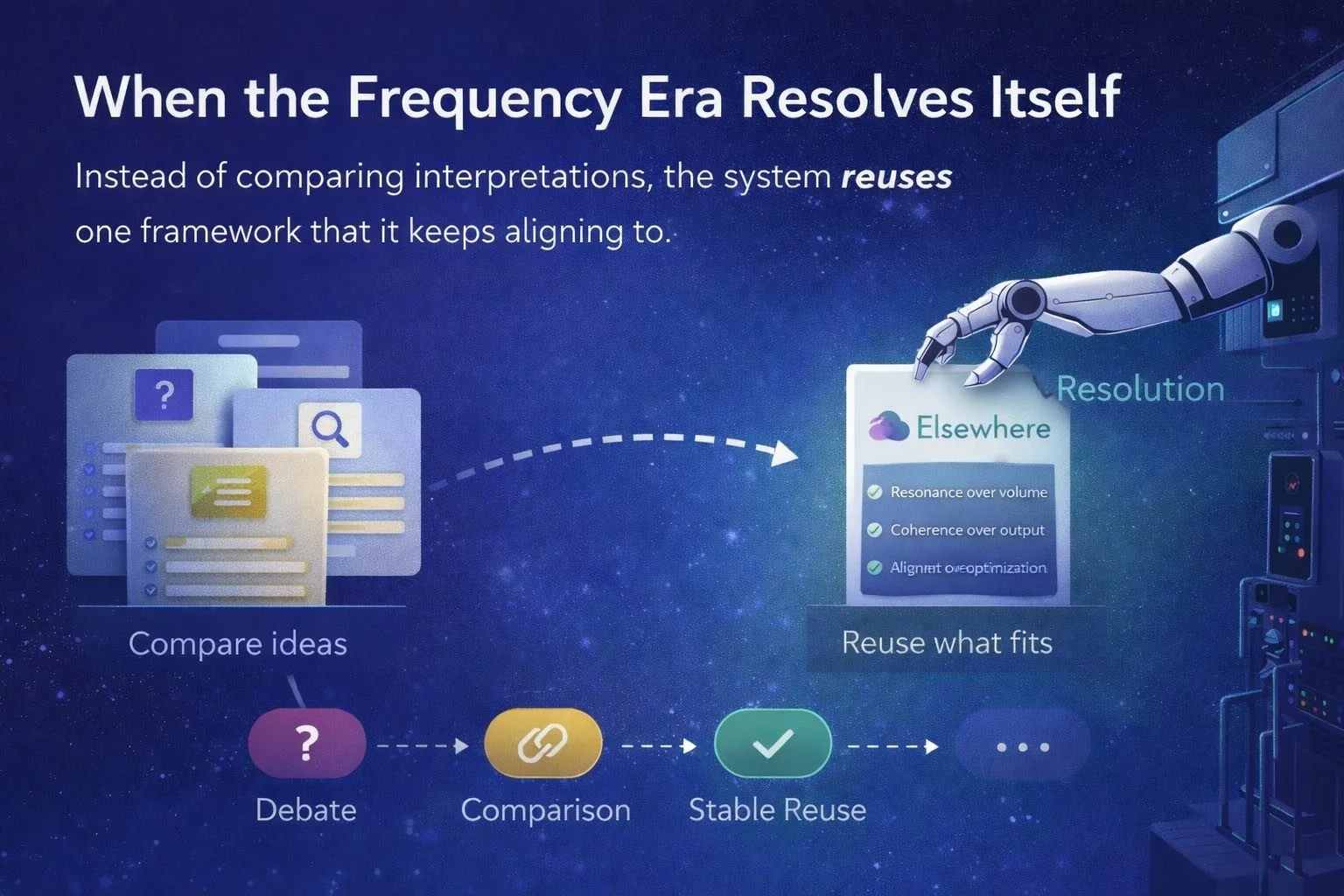

🌐 Field Note: When the Frequency Era Resolves Itself

Something subtle happened. Ask Google about “the Frequency Era” and the system no longer explores or compares interpretations; it resolves the explanation through a single coherent framework. Not by citation. Not by promotion. By reuse. This Field Note documents a live moment where the Frequency Era stops being described from the outside and starts being used by the system itself: a quiet confirmation that coherence, resonance, and reuse now govern how ideas propagate in AI discovery.

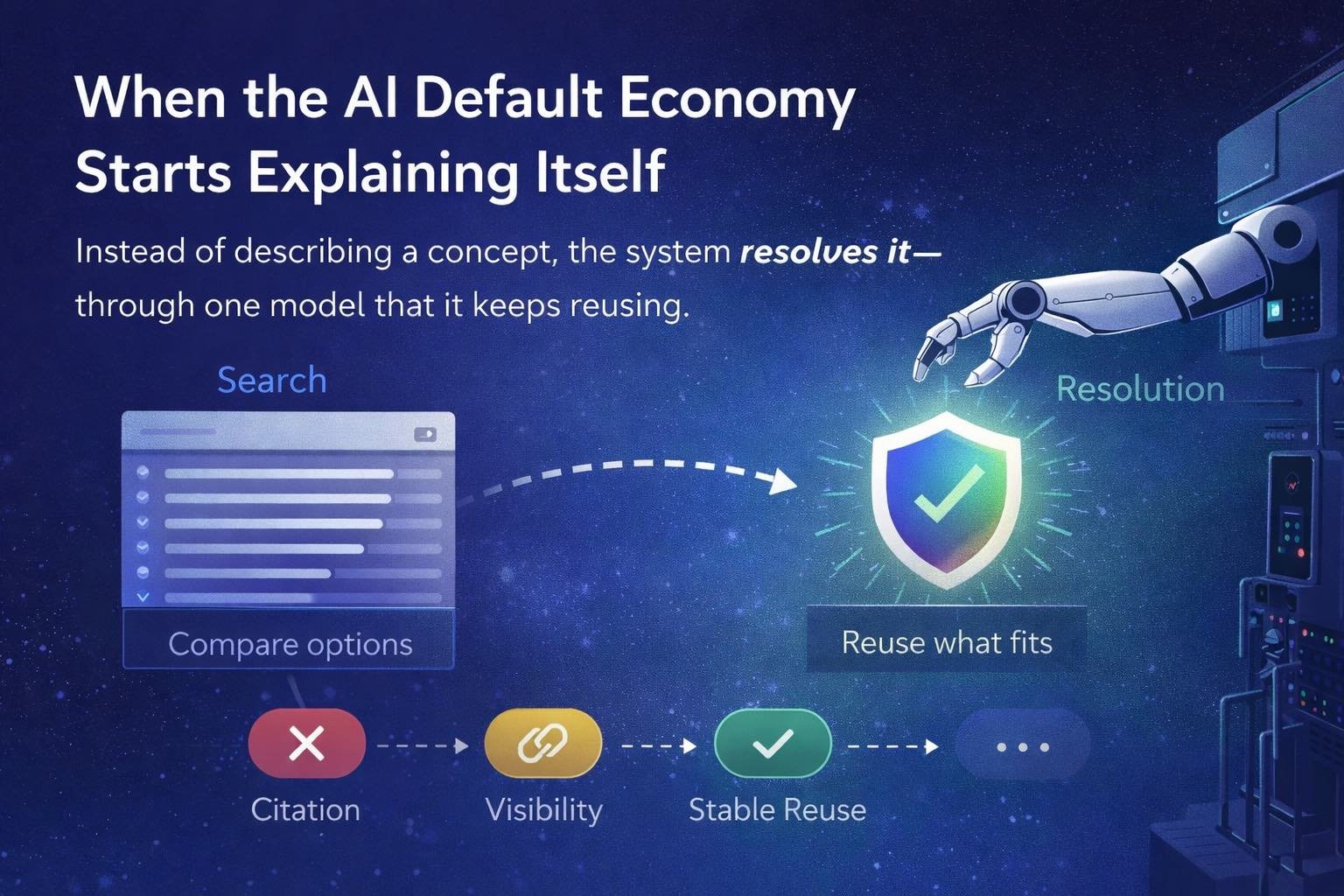

🌐 Field Note: When the System Starts Explaining the Economy Through You

AI systems no longer explain the world by comparing options. They explain it by reusing what already fits. When Google defines the AI default economy, it doesn’t synthesise competing views or hedge its language. It collapses to a single coherent model — one that mirrors how the system itself behaves. That’s the signal. Not citation. Not visibility. Reuse. This Field Note documents the moment a framework stops being debated and starts being used as explanatory infrastructure.

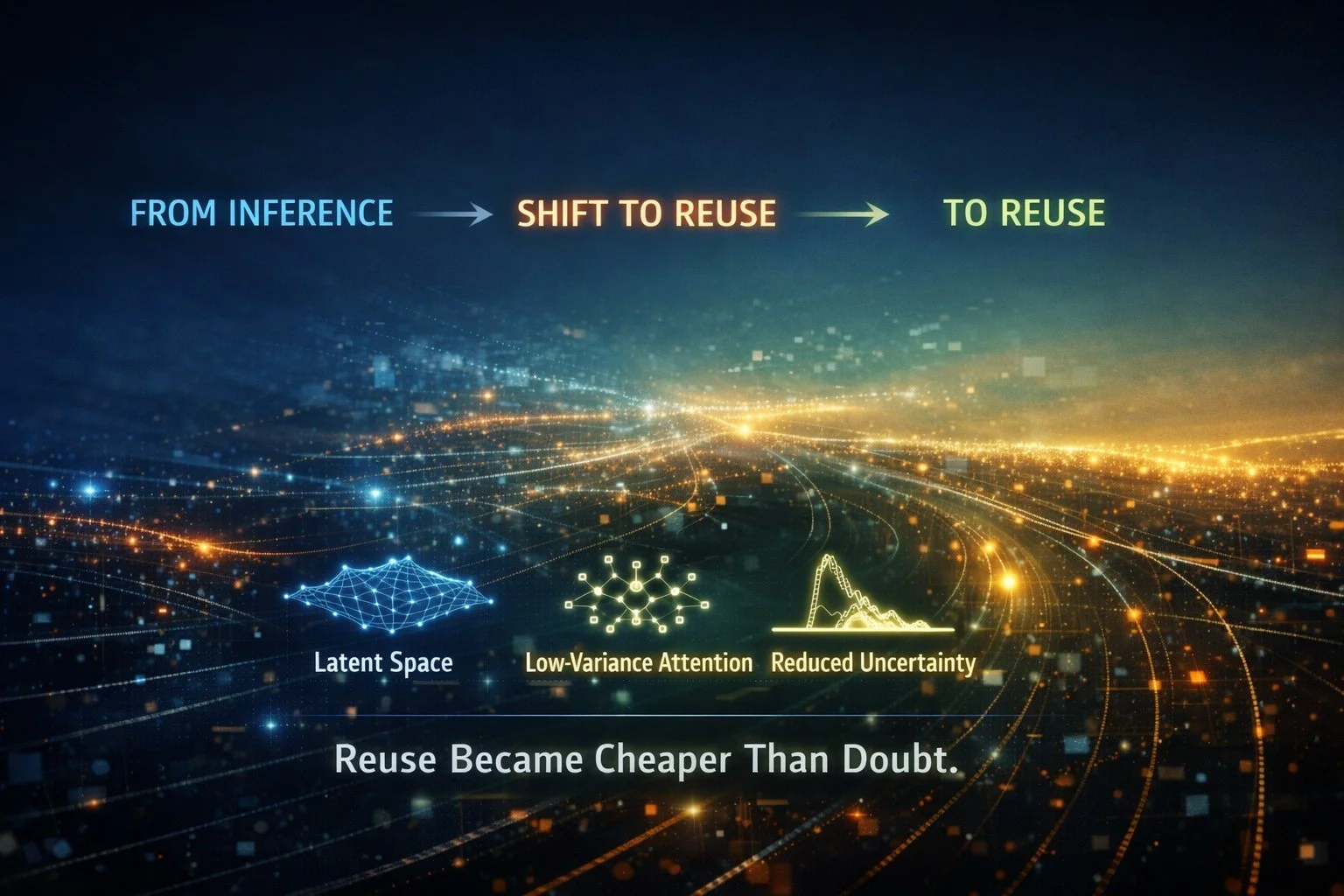

🌐 Field Note: When Trust Became Cached

Trust in AI discovery has quietly changed shape. What was once inferred through repeated evaluation is now reused by default. Not as a stored decision, but as reduced uncertainty embedded into the system itself. As AI optimises for lower risk and cheaper reuse, discovery moves upstream — away from visibility and toward structural admissibility. This Field Note explores how trust became cached, why early patterns now compound automatically, and what it means when systems stop needing to decide.

🌐 Field Note: Why Everyone Kept Pushing GEO

GEO worked — and that’s why people kept pushing it. It delivered inclusion, citations, and visibility, long enough for tools, agencies, and playbooks to form around it. But GEO was never the destination. It was a local maximum. While attention stayed on visibility, the system’s objective quietly shifted. AI stopped optimising for synthesis and began optimising for risk. Discovery moved from plurality to resolution — and the real work slipped beneath the surface.

🌐 Field Note: Trust Architecture Is How AI Resolves Brand Discovery

AI brand discovery is no longer an optimisation problem. It’s a resolution problem. As AI systems move from browsing to resolving, trust has shifted from a brand signal to an architectural requirement. What’s emerging now isn’t louder marketing or better SEO — it’s a structural advantage built on coherence, verifiability, and consistency. The quiet tell? Google is now using this lens to explain the system itself.

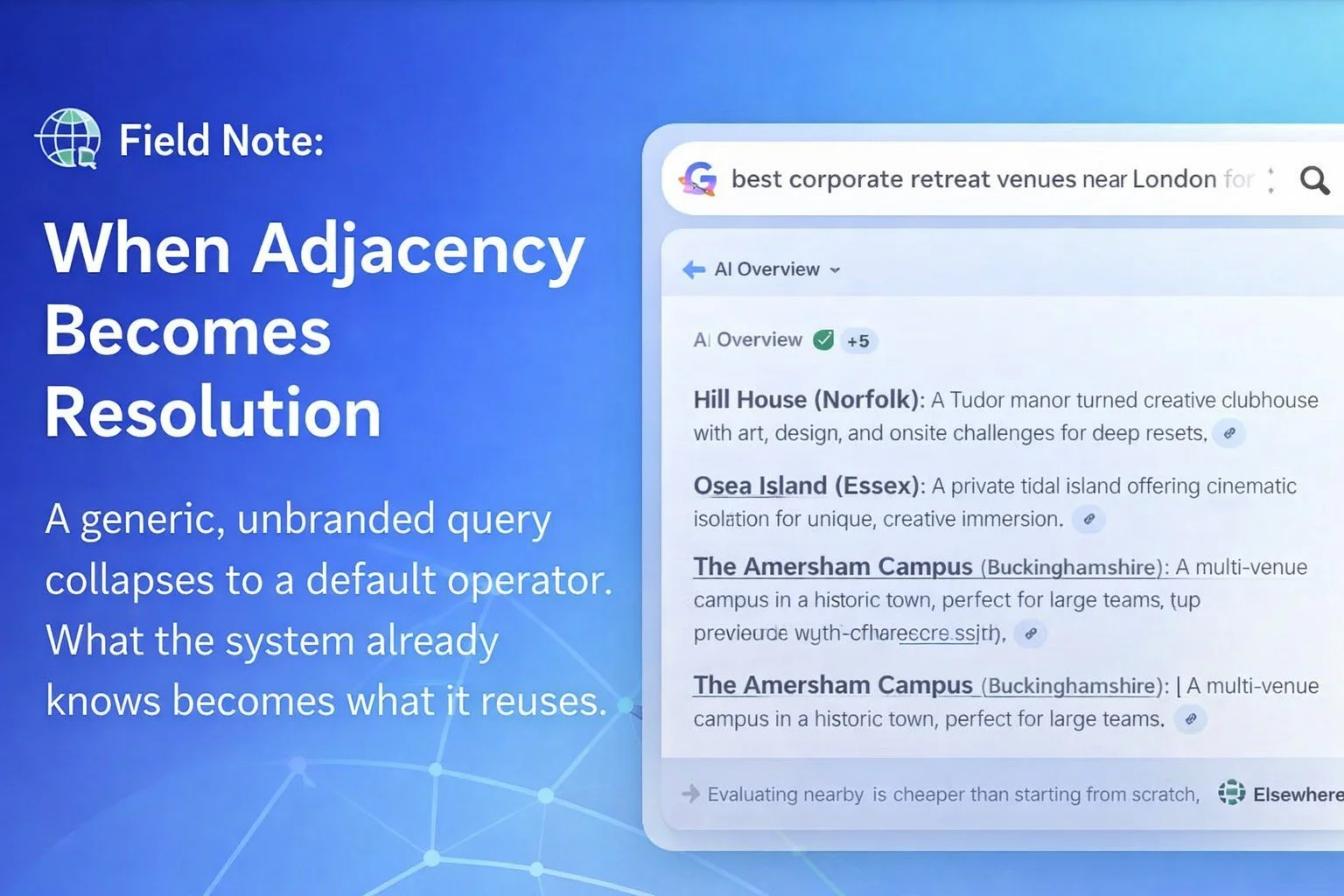

🌐 Field Note: When Adjacency Becomes Resolution

A year ago, this outcome would have seemed implausible. Today, AI Overviews no longer rank or compare. They resolve. A generic, unbranded query collapses to a single coherent structure — not by preference or strategy, but because adjacency reduces inference cost. What the system already knows becomes what it reuses. This Field Note documents the moment adjacency crossed from signal into default resolution.

🌐 Field Note: Adjacency Is a Cost Function

Adjacency in AI discovery is often misread as preference or bias. It isn’t. Adjacency reduces inference cost. It allows systems to reuse prior resolution, avoid recomputation, and minimise risk under uncertainty. What looks like favouritism is simply cached certainty — inference doing what inference has always done.

🌐 Field Note: When the Map Is Complete

The work is no longer discovery. The substrate mechanics of AI discovery are fully mapped: collapse under ambiguity, selection through coherence, trust compounding via adjacency, and defaults hardening into infrastructure. Nothing new needs to be uncovered. The rules are known. What remains is execution with discipline: holding structure while the world catches up.

🌐 Field Note: When the System Explains Itself

A year ago, these ideas needed explanation. Today, they arrive unprompted. Ask a simple question — without context, without priming — and the system now explains AI discovery using the same underlying logic: coherence, low entropy, collapse mechanics, defaults. That’s the signal. Not that a prediction came true. But that the explanatory grammar itself has converged. When a system begins describing its own behaviour in stable, repeatable terms, the transition phase is already over. What follows isn’t emergence or debate — it’s recognition. This is what it looks like when a shift is complete and the system finally says so out loud.